Artificial-Intelligence-Driven Decision-Making in the Clinic. Ethical, Legal, and Societal Challenges

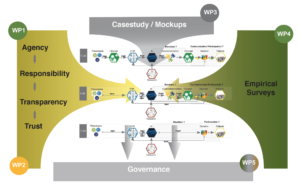

AI is on everyone’s lips. Applications of AI are becoming increasingly relevant in the field of clinical decision-making. While many of the conceivable use cases of clinical AI still lie in the future, others have already begun to shape practice. The project vALID provides a normative, legal, and technical analysis of how AI-driven Clinical Decision Support Systems could be aligned with the ideal of clinician and patient sovereignty. It examines how concepts of trustworthiness, transparency, agency, and responsibility are affected and shifted by clinical AI, both on a theoretical level and with regard to concrete moral and legal consequences. One of the hypotheses of vALID is that clinical AI shifts the basic concepts of our normative and bioethical instruments. Depending on how a system is embedded in decision-making processes, the modes of interaction among the actors involved are transformed. vALID thus investigates the following questions: Who or what guides clinical decision-making if AI appears as a new (quasi-)agent on the scene? Who is responsible for errors? What would it mean to make AI black boxes transparent to clinicians and patients? How could the ideal of trustworthy AI be attained in the clinic?

The analysis is grounded in an empirical case study, which deploys mock-up simulations of AI-driven Clinical Decision Support Systems to systematically gather the attitudes of physicians and patients toward a variety of designs and use cases. The aim is to develop an interdisciplinary governance perspective on the design, regulation, and implementation of AI-based clinical systems that not only optimize decision-making processes, but also maintain and reinforce the sovereignty of physicians and patients.